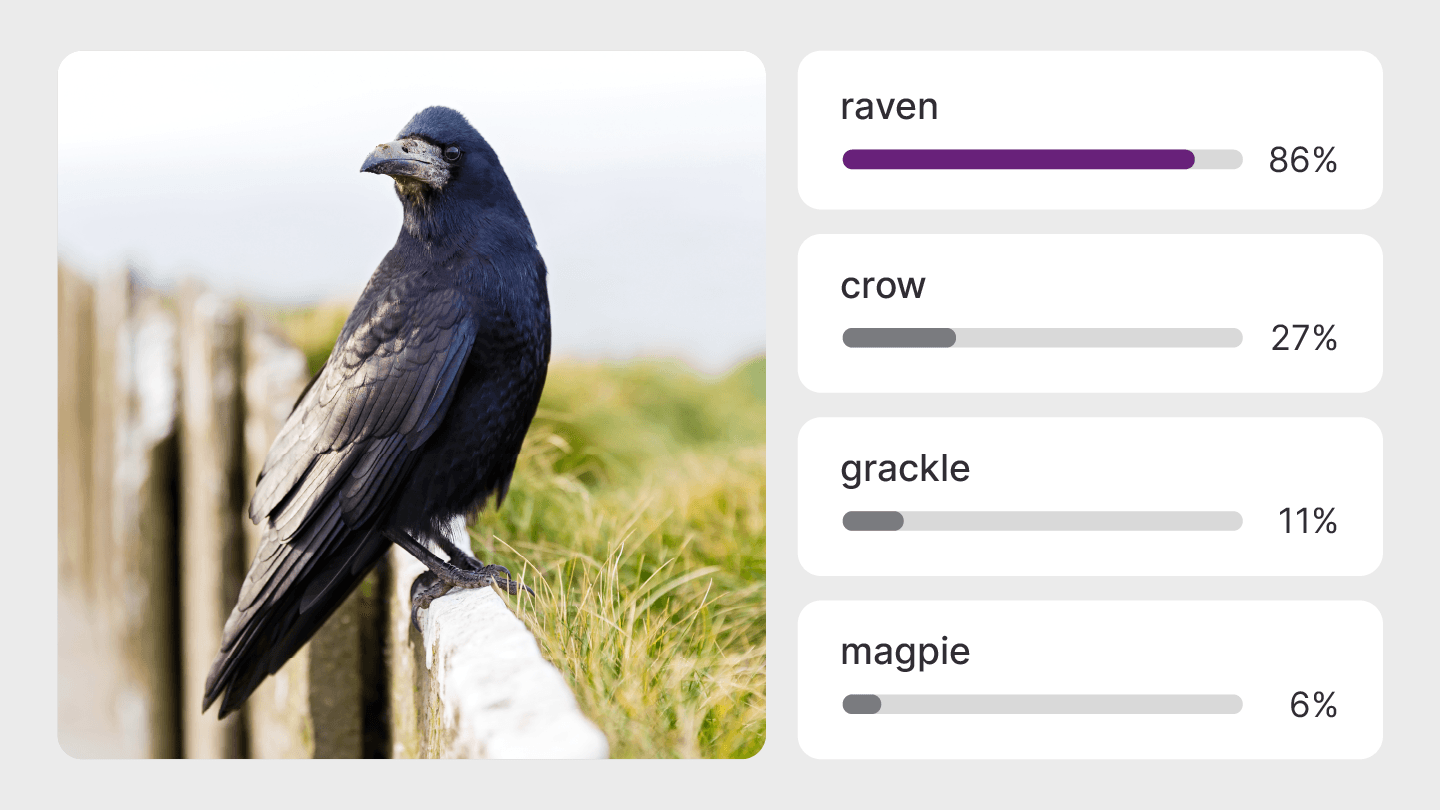

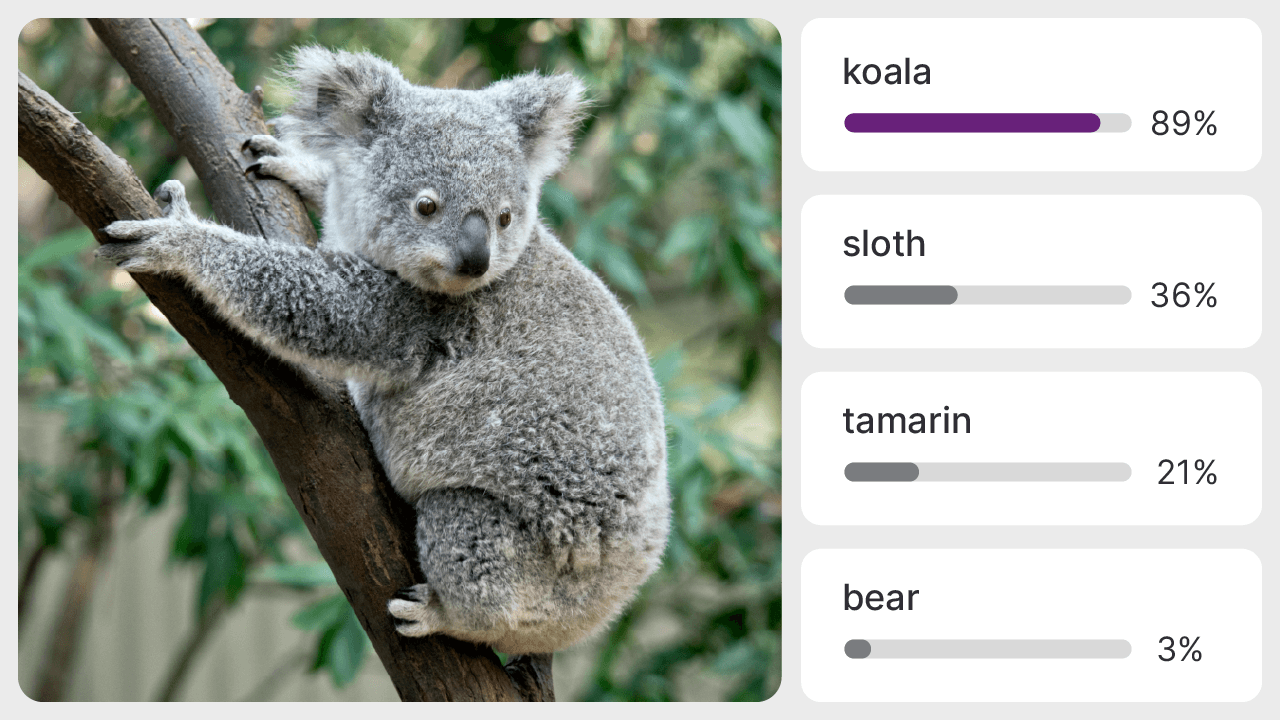

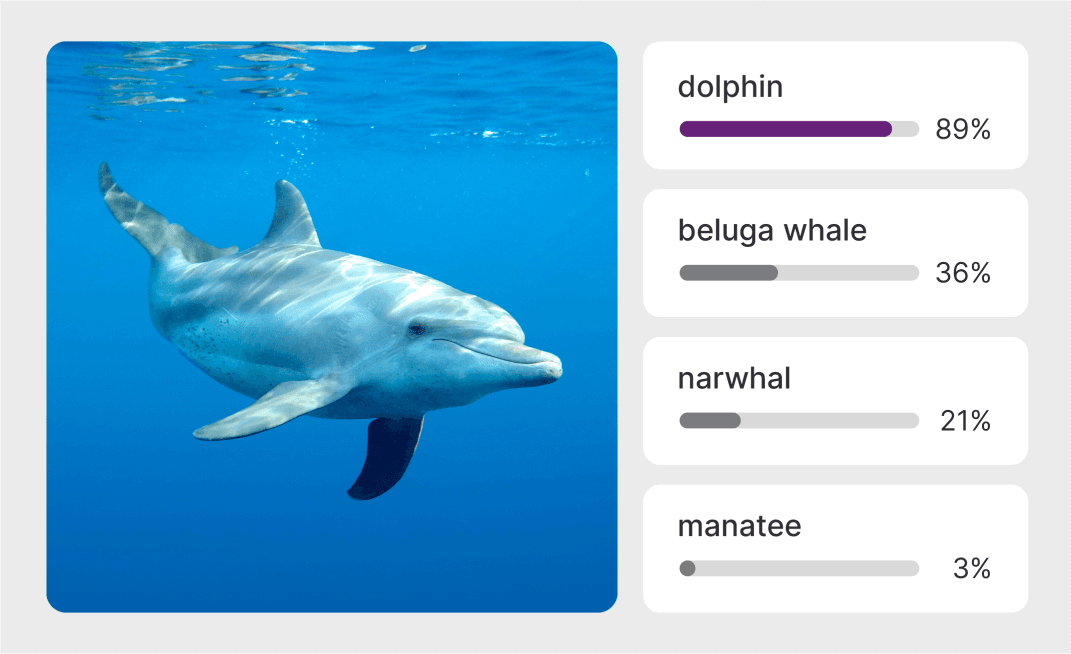

ConvNext-Tiny-W8A16-Quantized

ImageNet classifier and general-purpose backbone.

ConvNextTiny is a machine learning model that can classify images from the ImageNet dataset. It can also be used as a backbone for building more complex models for specific use cases.

Technical Details

Model checkpoint: ImageNet

Input resolution: 224x224

Number of parameters: 28.6M

Model size: 28 MB

Precision: w8a16 (8-bit weights, 16-bit activations)
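
To make the w8a16 precision concrete, here is a minimal sketch of symmetric per-tensor quantization, with weights mapped to 8-bit integers and activations to 16-bit integers. It is an illustration only: the scheme, the helper names (`quantize_symmetric`, `dequantize`), and the toy values are assumptions, not the quantizer used to produce this model. The last lines do a rough size check, assuming one byte per 8-bit weight and ignoring scales and file metadata.

```python
def quantize_symmetric(values, num_bits):
    """Map floats to signed integers of the given width using one shared scale.

    Hypothetical helper: a simple symmetric per-tensor scheme for illustration.
    """
    qmax = 2 ** (num_bits - 1) - 1  # 127 for 8 bits, 32767 for 16 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest integer step, then clamp to the representable range.
    quantized = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the integers and their scale."""
    return [q * scale for q in quantized]


# Toy values, made up for this example.
weights = [0.42, -1.27, 0.05, 0.9]
activations = [3.5, -0.25, 12.0, 0.001]

w_q, w_scale = quantize_symmetric(weights, num_bits=8)        # "w8"
a_q, a_scale = quantize_symmetric(activations, num_bits=16)   # "a16"

# Worst-case round-trip error; rounding bounds it by half a quantization step.
w_err = max(abs(x - y) for x, y in zip(weights, dequantize(w_q, w_scale)))
a_err = max(abs(x - y) for x, y in zip(activations, dequantize(a_q, a_scale)))

# Rough storage estimate: 28.6M parameters at 1 byte each.
num_params = 28.6e6
approx_weight_mb = num_params * 1 / 1e6

print(f"weight step {w_scale:.6f}, max error {w_err:.6f}")
print(f"activation step {a_scale:.6f}, max error {a_err:.6f}")
print(f"approximate weight storage: {approx_weight_mb:.1f} MB")
```

The 16-bit activation grid is far finer than the 8-bit weight grid, which is the usual motivation for keeping activations at higher precision. The storage estimate lands in the same range as the listed model size, though the actual file layout may differ.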

Applicable Scenarios

- Medical Imaging

- Anomaly Detection

- Inventory Management

Licenses

Source Model: BSD-3-CLAUSE

Deployable Model: AI-HUB-MODELS-LICENSE

Tags

- quantized

Supported Compute Devices

- Snapdragon X Elite CRD

- Snapdragon X Plus 8-Core CRD

Supported Compute Chipsets

- Snapdragon® X Elite

- Snapdragon® X Plus 8-Core
