Accelerate your on-device ML development in minutes

Start developing with Qualcomm® AI Hub

Qualcomm AI Hub is the place to build and optimize ML models for Qualcomm devices. Our three products can be used independently or together as part of your on-device development workflow.

Follow the walkthrough that best fits your needs:

Getting started with Qualcomm® AI Hub Models. Browse and download pre-optimized models covering vision, speech, audio, and text applications, validated on Qualcomm® chipsets.

Getting started with Qualcomm® AI Hub Apps. Clone and customize sample application code for bundling a model to deploy on-device. Filter by domain, operating system, and more.

Getting started with Qualcomm® AI Hub Workbench. Optimize your custom trained model for a target runtime, run inference, and profile on-device performance with a few lines of code. Target 50+ hosted Qualcomm devices, provisioned within minutes.

Account Setup

To use AI Hub Workbench and export certain models from AI Hub Models, you will first need to create a Qualcomm ID and authenticate with your AI Hub API token.

Create a Qualcomm ID

If you already have a Qualcomm ID, skip this step and use your credentials to log into AI Hub Workbench. Otherwise, navigate to the Qualcomm ID sign up page and follow these steps:

- Fill in your information

- Verify your email address

- Choose your work location

At this point, you will be signed in and directed to your Qualcomm ID Profile page.

Authenticate with AI Hub

Navigate to your Workbench Settings page. Open a terminal window to complete the rest of your setup:

Install the qai-hub package from PyPI:

pip3 install qai-hub

Run the following command with the API token from your Settings page:

qai-hub configure --api_token API_TOKEN

Verify your token setup by listing the devices we support:

qai-hub list-devices
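If you would rather confirm the setup from Python, the sketch below reads the token back from the client configuration file. It assumes that `qai-hub configure` stores its settings in an INI file at `~/.qai_hub/client.ini` with an `[api]` section containing an `api_token` key. That location and layout are assumptions, not something this guide states, so adjust them to match your installation. The helper name `read_api_token` is hypothetical.

```python
import configparser
from pathlib import Path
from typing import Optional

# Assumed default location of the client config (not confirmed by this guide).
DEFAULT_CONFIG = Path.home() / ".qai_hub" / "client.ini"


def read_api_token(config_path: Path = DEFAULT_CONFIG) -> Optional[str]:
    """Return the stored API token, or None if it cannot be found."""
    parser = configparser.ConfigParser()
    # ConfigParser.read() silently skips files that do not exist.
    if not parser.read(config_path):
        return None
    # The [api] section and api_token key are assumed names.
    token = parser.get("api", "api_token", fallback=None)
    return token or None


if __name__ == "__main__":
    token = read_api_token()
    if token is None:
        print("No API token found; run `qai-hub configure` first.")
    else:
        # Avoid printing the full secret.
        print(f"Token configured (ends with ...{token[-4:]})")
```

If the helper reports that no token was found even though `qai-hub list-devices` works, the assumed file location is probably wrong for your environment.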

Now you're ready to get started!

Getting Started with AI Hub Models

Download an off-the-shelf ML model that works smoothly on your device. Use AI Hub Models to browse and explore over 150 benchmarked, ready-to-run models that have been optimized for Qualcomm devices. Models come with open-source recipes on GitHub and Hugging Face.

This flow walks you through steps on how to find, demo, and download a model. For this example, we're choosing an image classification model for the Samsung Galaxy S25 and looking at LiteRT runtime metrics. LiteRT, recently rebranded from TFLite, is the recommended runtime for Android Developers; references to TFLite in AI Hub will be updated over time. These steps can be followed with any of our AI Hub Models.

- Find a model

Open Models in a new tab. Use the filters on the left side to narrow down our options:

- Under Domain/Use Case, choose Computer Vision and then Image Classification

- Under Device, select Samsung Galaxy S25

- Under Model Precision, select Quantized

- Select the EfficientNet-B0 model

- Review performance metrics

From the EfficientNet-B0 model card, choose float and TFLite to see the associated metrics for the Samsung Galaxy S25. Benchmarks are run every two weeks to ensure up-to-date model metrics across applicable runtimes.

- Demo the model locally

Follow the GitHub Model Repository link from the EfficientNet-B0 model card page and scroll down to the Example & Usage section to find these commands to test the model from your CLI:

- Install qai-hub-models

pip3 install qai-hub-models

- Run the demo

python -m qai_hub_models.models.efficientnet_b0.demo

- Check the demo output against the default test image.

- Try running the model against one of your own images by setting IMGPATH in the command below:

python3 -m qai_hub_models.models.efficientnet_b0.demo --image <IMGPATH>

- (Optional) Demo the model on device

Export the model to auto-optimize it for on-device execution, then run the demo on a device. This step uses Workbench under the hood — if you haven't already, create a Workbench account following the instructions in Account Setup before diving in.

Export the model. This automatically runs profile and inference jobs on the model and prints out the results to the CLI. Click the job links in the printout to review full results.

python -m qai_hub_models.models.efficientnet_b0.export

Run on device. The last line of the previous step prints out a command for running the compiled model on sample data on a hosted device. Copy and paste the command, editing the device name as needed, then submit the job.

Verify the on-device model predictions that are printed out, then follow the inference job URL to see how your model performed on device.

To see complete options for the demo script, run:

python3 -m qai_hub_models.models.efficientnet_b0.demo --help

- Download model

Happy with the performance? Click Download Model, select the TFLite runtime, and download the model.

Getting Started with AI Hub Apps

One of the hardest parts of deploying on device is figuring out how to get from a model to the device. AI Hub Apps bridges this gap by providing sample application code and instructions for a variety of on-device use cases and implementations. Follow the notes in each repository to choose a compatible model, configure your application, and test on device.

To complete this tutorial, you'll need to have Android Studio installed (2023.1.1 or later) and an Android device on hand. We will walk through the steps of deploying an image classification app on an Android device.

- Find an app

Open Apps in a new tab. Use the filters on the left side to narrow down our options to the associated application:

- Under Domain/Use Case, choose Computer Vision and then Image Classification

- Under Operating System, select Android

- Since we're testing on mobile, select the TFLite runtime

Select Image Classification Android to see a list of compatible models and a link to the App README

Unsure what runtime to target? We recommend choosing the runtime based on the type of device you’re working with:

- TFLite for Mobile and IoT

- ONNX for Compute

- QNN for Auto
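If you script your workflow, the recommendation above can be captured as a small lookup table. This is only an illustrative sketch: the `recommend_runtime` helper and the lowercase category keys are hypothetical names, and the mapping simply restates the list above.

```python
# Recommended runtime per device category, as listed above.
RECOMMENDED_RUNTIME = {
    "mobile": "TFLite",
    "iot": "TFLite",
    "compute": "ONNX",
    "auto": "QNN",
}


def recommend_runtime(device_type: str) -> str:
    """Look up the suggested runtime for a device category (case-insensitive)."""
    key = device_type.strip().lower()
    if key not in RECOMMENDED_RUNTIME:
        raise ValueError(f"Unknown device type: {device_type!r}")
    return RECOMMENDED_RUNTIME[key]


print(recommend_runtime("Mobile"))   # TFLite
print(recommend_runtime("compute"))  # ONNX
```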

- Clone locally

Clone the ai-hub-apps GitHub repository and navigate to the Image Classification directory. If you don't already have Git LFS installed, set that up and make sure that your cloned repo has a .gitattributes file.

    git clone https://github.com/quic/ai-hub-apps.git
    cd ai-hub-apps
    git add .gitattributes
    cd apps/android/ImageClassification

- Add your model

If you're coming from the Models or Workbench workflows, you can reuse the model you downloaded in this step. If not, follow the link to the compatible EfficientNet-B0 model listed when you filter AI Hub Models by Image Classification and download the TFLite float version of the model. Copy your model to your working directory:

cp path/to/downloaded/classifier.tflite src/main/assets/classifier.tflite

- Build app

The repository has all the code needed to build an Android app! All you need to do is to update the model reference and build with the new target.

Sync. Load Android Studio and open the ai-hub-apps/apps/android/ImageClassification folder. Once the folder is fully loaded, run gradle sync (File→Sync Project with Gradle Files).

Build for target. Select the ImageClassification folder and build it (Build→Compile `QualcommAI_Hub…ImageClassification.main`).

- Run app on device

Follow the Android Studio setup instructions for connecting to your Android device, make sure that the ImageClassification configuration is selected, and click Run from the Android Studio toolbar.

- (Optional) Customize!

The READMEs for each sample app (like this Image Classification one) provide more details on what this app code currently supports. Use our code as a starting point and modify as needed to support your use case.

Getting Started with AI Hub Workbench

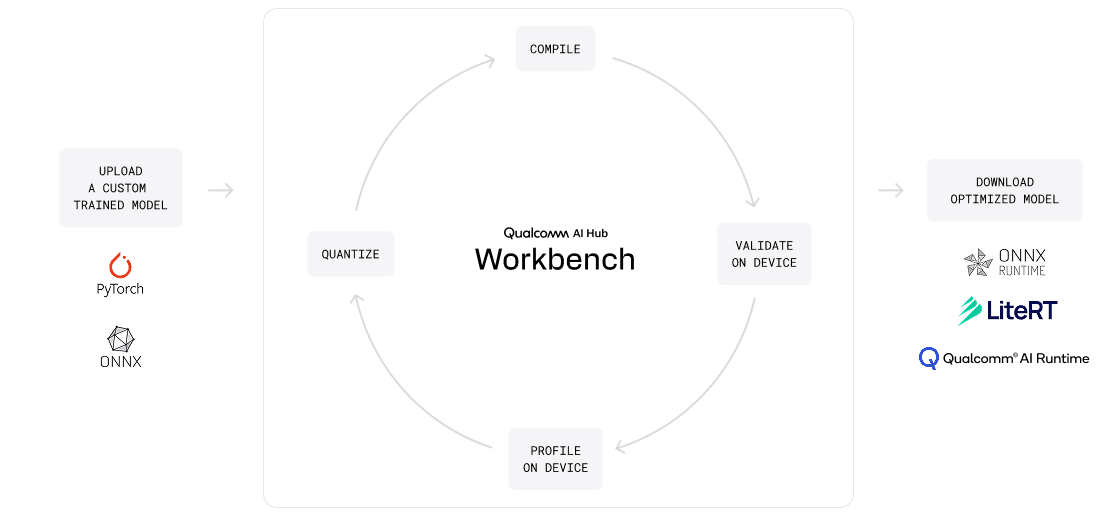

Optimize a trained model for your device. Bring an open source or custom trained model to Workbench and specify your target device and runtime for automated conversion and translation into a model ready for efficient on-device deployment.

Workbench can be used to quantize models and run inference on cloud-hosted Qualcomm devices for easy experimentation, allowing you to iteratively validate and profile to reach the ideal balance of speed, accuracy, and memory usage for your use case.

In this flow, we are optimizing the open source MobileNet model for the Samsung Galaxy S25 (Family), which uses a TFLite runtime. If you haven't already, create a Workbench account following the instructions in Account Setup before diving in.

- Set up dependencies

Open your preferred Python IDE and install any packages needed. For the MobileNet model, you can install the dependencies from AI Hub Models with this line:

pip3 install 'qai-hub[torch]'

- Import and trace model

Workbench requires a traced model for compilation. We can load this pre-trained MobileNet model and go into eval mode to trace it. If you are bringing a custom trained model that has already been traced, you can skip the tracing step!

    # Import torch and pre-trained MobileNet
    import torch
    from torchvision.models import mobilenet_v2

    torch_model = mobilenet_v2(pretrained=True)
    torch_model.eval()

    # Trace model (for on-device deployment)
    input_shape = (1, 3, 224, 224)
    example_input = torch.rand(input_shape)
    traced_torch_model = torch.jit.trace(torch_model, example_input)

- Select device and compile

Import Workbench, set your target device, and compile the model for TFLite. Behind the scenes, Workbench optimizes the model to work with the Qualcomm AI Stack. Follow the compile job link to access the output model, parameters, logs, and more. All jobs are saved to your Workbench dashboard for future reference.

    import qai_hub as hub

    device = hub.Device("Samsung Galaxy S25 (Family)")

    # Optimize model for the chosen device
    compile_job = hub.submit_compile_job(
        model=traced_torch_model,
        device=device,
        input_specs=dict(image=input_shape),
        options="--target_runtime tflite"
    )
    target_model = compile_job.get_target_model()

- Submit an inference job

Test your model on device. We'll set up the input image and run on a real cloud-hosted device — be patient with the provisioning and setup! You can click into the inference job link to see the minimum inference time and estimated peak memory usage through the Workbench web UI.

    import requests
    import numpy as np
    from PIL import Image

    # Provide sample image
    sample_image_url = (
        "https://qaihub-public-assets.s3.us-west-2.amazonaws.com/apidoc/input_image1.jpg"
    )

    # Convert image to numpy array to match input specs
    response = requests.get(sample_image_url, stream=True)
    response.raw.decode_content = True
    image = Image.open(response.raw).resize((224, 224))
    input_array = np.expand_dims(
        np.transpose(np.array(image, dtype=np.float32) / 255.0, (2, 0, 1)), axis=0
    )

    # Run inference using input image
    inference_job = hub.submit_inference_job(
        model=target_model,
        device=device,
        inputs=dict(image=[input_array]),
    )
    on_device_output = inference_job.download_output_data()

- Validate model accuracy

Post-process the on-device output to check the results against the input image.
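The post-processing in this step is a softmax over the raw model outputs followed by a top-k selection. The dependency-free sketch below illustrates both operations on a toy score vector. The helper names `softmax` and `top_k` and the example scores are made up for illustration. The sketch subtracts the maximum score before exponentiating, a common way to avoid overflow on large values that leaves the resulting probabilities unchanged.

```python
import math
from typing import List, Tuple


def softmax(logits: List[float]) -> List[float]:
    """Convert raw scores to probabilities that sum to 1."""
    peak = max(logits)  # shift by the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_k(probabilities: List[float], k: int) -> List[Tuple[int, float]]:
    """Return (class_index, probability) pairs, most likely first."""
    ranked = sorted(enumerate(probabilities), key=lambda pair: pair[1], reverse=True)
    return ranked[:k]


# Toy scores for a 4-class problem (illustrative values only).
scores = [2.0, 1.0, 0.1, 3.0]
for index, prob in top_k(softmax(scores), k=2):
    print(f"class {index}: {prob:.1%}")
```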

    # Post-process the on-device output
    output_name = list(on_device_output.keys())[0]
    out = on_device_output[output_name][0]
    on_device_probabilities = np.exp(out) / np.sum(np.exp(out), axis=1)

    # Read the class labels for imagenet
    sample_classes = "https://qaihub-public-assets.s3.us-west-2.amazonaws.com/apidoc/imagenet_classes.txt"
    response = requests.get(sample_classes, stream=True)
    response.raw.decode_content = True
    # Decode each line from bytes so labels print as plain text
    categories = [s.decode("utf-8").strip() for s in response.raw]

    # Display input image
    from IPython import display
    display.Image(sample_image_url)

    # Print top 5 predictions for the on-device model
    print("Top-5 On-Device predictions:")
    top5_classes = np.argsort(on_device_probabilities[0], axis=0)[-5:]
    for c in reversed(top5_classes):
        print(f"{c} {categories[c]:20s} {on_device_probabilities[0][c]:>6.1%}")

- Profile on-device

The inference predictions seem reasonable, so let's move on to checking how the model performs on the device with a profile job. Follow the profile job link to double check that your model is using the expected compute unit (in this case, the NPU) and explore detailed metrics such as compilation time, first app load time, memory consumption, inference time, per-layer runtime analysis, and more.

    # Submit profile job
    profile_job = hub.submit_profile_job(
        model=target_model,
        device=device,
    )

Unhappy with the model accuracy? Consider trying a different precision, re-training your model, or starting from the beginning with a different open source model.

- (Optional) Quantize your model

Do your profile job results show too much memory consumption? If you're using an open source model, consider quantizing your model to make it smaller.
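As a rough back-of-the-envelope check on why quantization shrinks a model, weight storage scales with the number of bytes per parameter. The sketch below assumes roughly 3.5 million parameters for MobileNetV2 (an approximate figure not taken from this guide) and counts weights only, ignoring file-format overhead and any layers kept at higher precision. The helper name `weight_size_mb` is hypothetical.

```python
# Bytes needed to store one weight at each precision.
BYTES_PER_WEIGHT = {"float32": 4, "float16": 2, "int8": 1}


def weight_size_mb(num_parameters: int, dtype: str) -> float:
    """Estimate weight storage in megabytes (10**6 bytes)."""
    return num_parameters * BYTES_PER_WEIGHT[dtype] / 1e6


# Approximate MobileNetV2 parameter count (assumption for illustration).
num_parameters = 3_500_000
for dtype in ("float32", "float16", "int8"):
    print(f"{dtype}: ~{weight_size_mb(num_parameters, dtype):.1f} MB")
```

Under these assumptions, INT8 weights take about a quarter of the float32 footprint. Actual memory use on device also depends on activations and runtime overhead, which the profile job reports.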

Compile to ONNX. Workbench quantize jobs require a compiled ONNX model as an input. Even if your model is already in ONNX format, compiling this way will ensure optimized patterns for quantization.

    # Compile your model to ONNX
    compile_job = hub.submit_compile_job(
        model=traced_torch_model,
        device=device,
        input_specs=dict(image=input_shape),
        options="--target_runtime onnx"
    )
    unquantized_onnx_model = compile_job.get_target_model()

Load and pre-process calibration data. For this example, we'll use 100 sample images from the PyTorch Imagenette dataset as our calibration data. Generally, we recommend using 500-1000 samples. Before running the code below, first download imagenette_samples.zip and unzip it in your local directory.

    # Load and pre-process calibration data
    # This transform is required for PyTorch ImageNet classifiers
    # Source: https://pytorch.org/hub/pytorch_vision_resnet/
    import os

    mean = np.array([0.485, 0.456, 0.406]).reshape((3, 1, 1))
    std = np.array([0.229, 0.224, 0.225]).reshape((3, 1, 1))
    sample_inputs = []

    images_dir = "imagenette_samples/images"
    for image_path in os.listdir(images_dir):
        image = Image.open(os.path.join(images_dir, image_path))
        image = image.convert("RGB").resize(input_shape[2:])
        sample_input = np.array(image).astype(np.float32) / 255.0
        sample_input = np.expand_dims(np.transpose(sample_input, (2, 0, 1)), 0)
        sample_inputs.append(((sample_input - mean) / std).astype(np.float32))
    calibration_data = dict(image=sample_inputs)

Submit a quantize job. Now we're ready to run the quantize job, in this case quantizing to INT8.

    # Submit quantize job
    quantize_job = hub.submit_quantize_job(
        model=unquantized_onnx_model,
        calibration_data=calibration_data,
        weights_dtype=hub.QuantizeDtype.INT8,
        activations_dtype=hub.QuantizeDtype.INT8,
    )
    quantized_onnx_model = quantize_job.get_target_model()

Compile to your target runtime. Quantized models can be compiled to any runtime; compile for the TFLite runtime to support your mobile device.

    # Optimize model for the chosen device
    compile_job = hub.submit_compile_job(
        model=quantized_onnx_model,
        device=device,
        input_specs=dict(image=input_shape),
        options="--target_runtime tflite"
    )
    target_model = compile_job.get_target_model()

Validate accuracy and performance. Evaluate this quantized model for accuracy and performance by repeating steps 4-6 (submitting an inference job, checking accuracy, and submitting a profile job) from above.

If the accuracy or performance is not meeting your needs, you can also re-quantize the model at a higher precision. If you are bringing a custom-trained model, we suggest quantizing with your own compute resources using Qualcomm's PyTorch ONNX AI Model Efficiency Toolkit (AIMET) prior to running a Workbench compile job to optimize for your device.
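To illustrate what INT8 quantization involves and why calibration data matters, here is a minimal, dependency-free sketch of generic asymmetric (affine) quantization: a scale and zero point are derived from the value range seen in calibration samples, and floats are then mapped to 8-bit integers. This is a textbook scheme shown for intuition only. It is not a description of how Workbench quantize jobs work internally, and all function names and sample values are hypothetical.

```python
from typing import List, Tuple

QMIN, QMAX = -128, 127  # signed 8-bit integer range


def calibrate(samples: List[float]) -> Tuple[float, int]:
    """Derive (scale, zero_point) from the observed value range."""
    # Include 0.0 in the range so that zero is exactly representable.
    lo = min(min(samples), 0.0)
    hi = max(max(samples), 0.0)
    scale = (hi - lo) / (QMAX - QMIN) or 1.0  # guard against an all-zero range
    zero_point = round(QMIN - lo / scale)
    return scale, zero_point


def quantize(x: float, scale: float, zero_point: int) -> int:
    """Map a float to the nearest INT8 code, clamping out-of-range values."""
    q = round(x / scale) + zero_point
    return max(QMIN, min(QMAX, q))


def dequantize(q: int, scale: float, zero_point: int) -> float:
    """Recover the approximate float value from an INT8 code."""
    return (q - zero_point) * scale


# Hypothetical activation values standing in for calibration data.
calibration_values = [-1.0, -0.25, 0.0, 0.5, 3.0]
scale, zero_point = calibrate(calibration_values)
for value in (-1.0, 0.0, 0.7, 3.0, 10.0):  # 10.0 lies outside the calibrated range
    q = quantize(value, scale, zero_point)
    print(f"{value:>5} -> {q:>4} -> {dequantize(q, scale, zero_point):.3f}")
```

Values inside the calibrated range round-trip with an error of at most half the scale, while values outside it are clamped. This is one reason a calibration set that is representative of real inputs tends to preserve accuracy better.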

- Download optimized model

If everything looks good, download your freshly optimized model to your machine.

target_model.download("mobilenet_v2.tflite")