DeepLabV3-Plus-MobileNet-Quantized

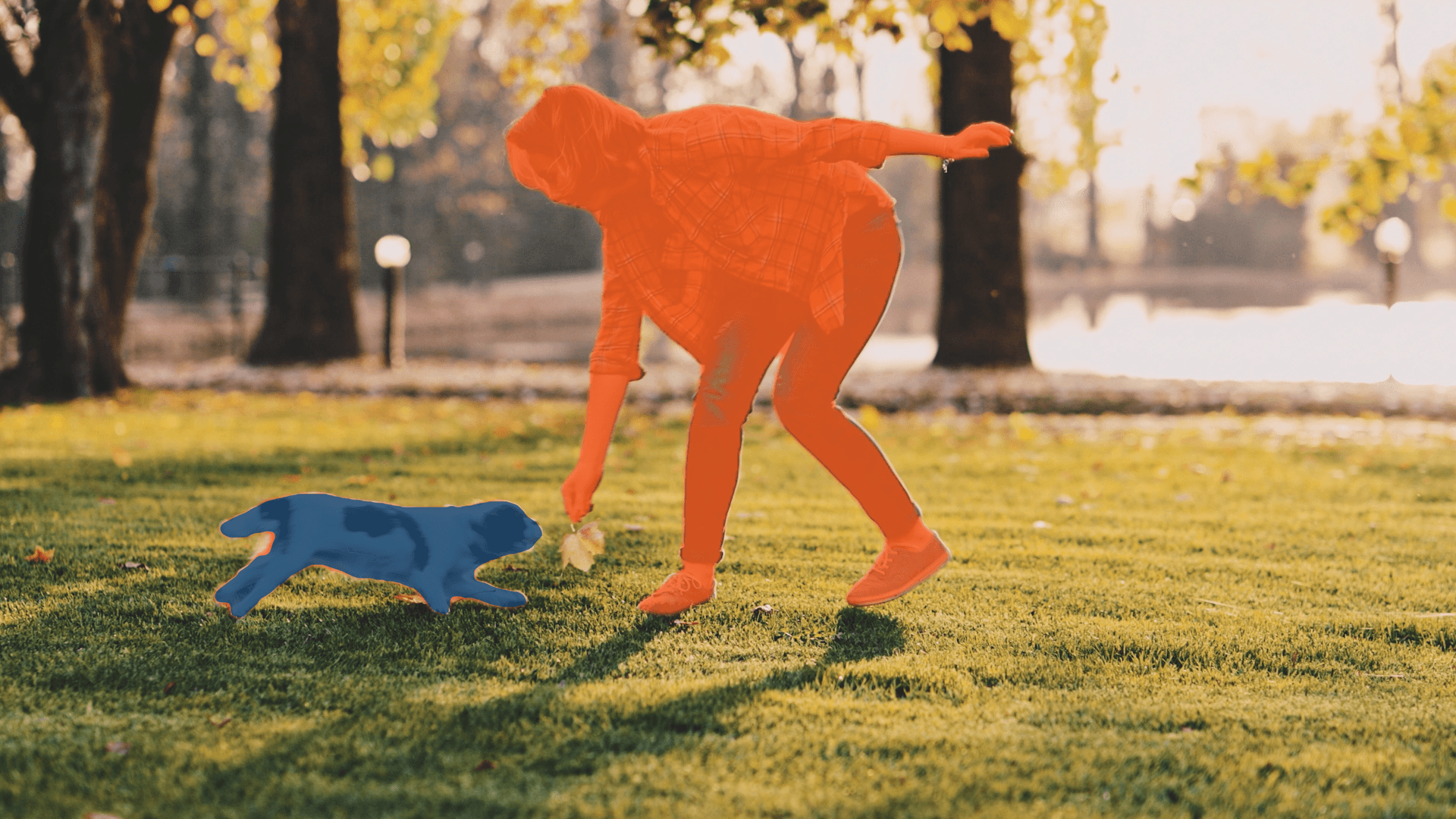

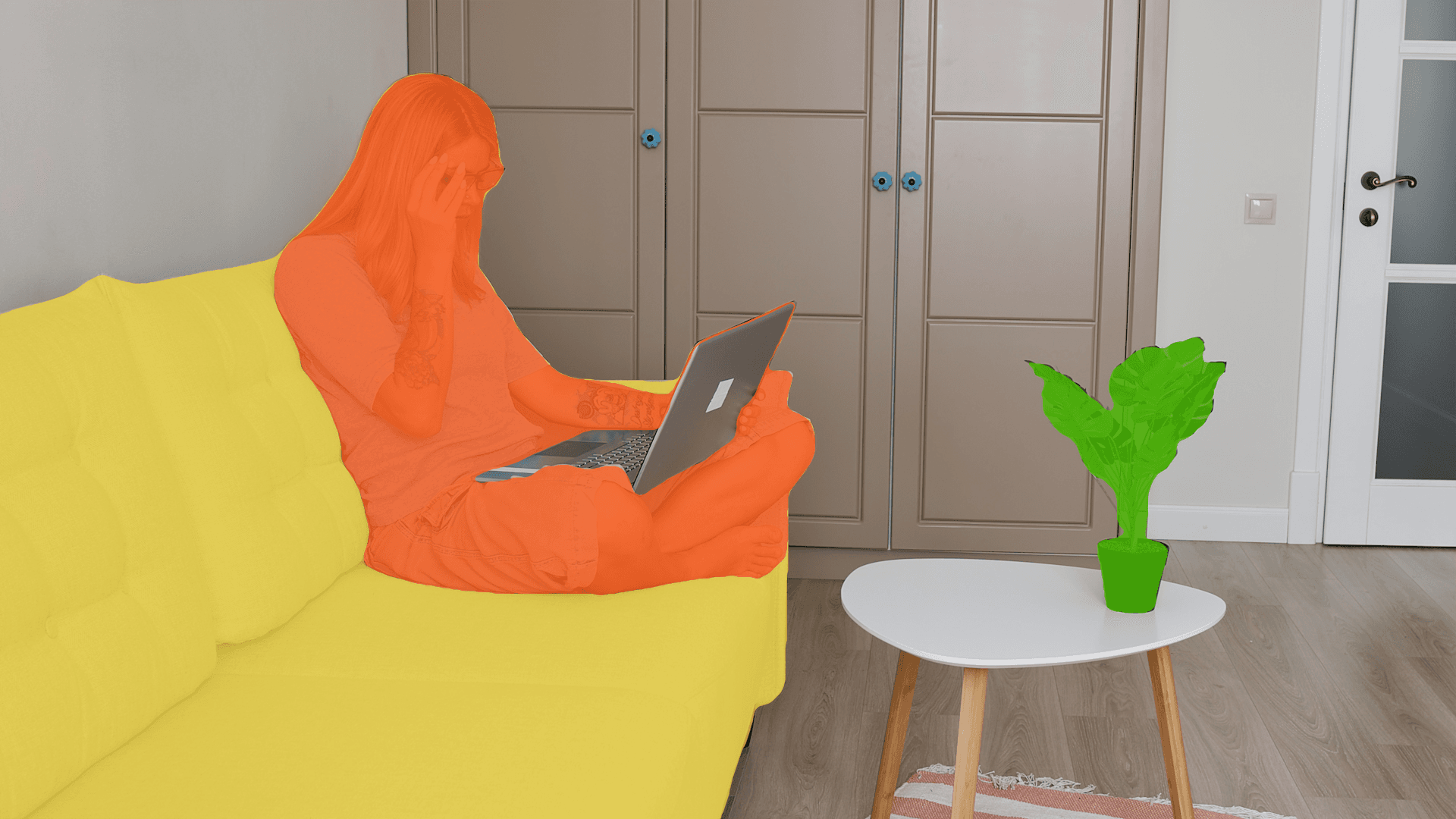

Quantized Deep Convolutional Neural Network model for semantic segmentation.

DeepLabV3 Quantized is a semantic segmentation model designed to segment objects at multiple scales. It uses MobileNet as its backbone and has been trained on various datasets.

Technical Details

Model checkpoint: VOC2012

Input resolution: 513x513

Number of parameters: 5.80M

Model size: 6.04 MB

Number of output classes: 21
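To make these numbers concrete, the following is a minimal sketch of how the 21-class output for a 513x513 input could be turned into a segmentation mask, and of what the parameter count and model size imply about weight precision. It does not call the actual model: the output layout `(classes, height, width)`, the class index order (the conventional Pascal VOC 2012 order), and the helper names `decode_logits`, `classes_present`, and `bytes_per_parameter` are all assumptions made for illustration.

```python
import numpy as np

# Values taken from the Technical Details above.
NUM_CLASSES = 21
INPUT_SIZE = 513

# Conventional Pascal VOC 2012 class order (ASSUMED to match the model's output indices).
VOC_CLASSES = [
    "background", "aeroplane", "bicycle", "bird", "boat", "bottle", "bus",
    "car", "cat", "chair", "cow", "diningtable", "dog", "horse", "motorbike",
    "person", "pottedplant", "sheep", "sofa", "train", "tvmonitor",
]


def decode_logits(logits: np.ndarray) -> np.ndarray:
    """Turn per-class scores of shape (NUM_CLASSES, H, W) into an (H, W) mask of class indices."""
    if logits.ndim != 3 or logits.shape[0] != NUM_CLASSES:
        raise ValueError(f"expected shape ({NUM_CLASSES}, H, W), got {logits.shape}")
    return np.argmax(logits, axis=0).astype(np.uint8)


def classes_present(mask: np.ndarray) -> list:
    """List the class names that occur at least once in the mask."""
    return [VOC_CLASSES[i] for i in np.unique(mask)]


def bytes_per_parameter(size_mb: float, params_millions: float) -> float:
    """Rough storage cost per parameter, treating 1 MB as 10**6 bytes (an assumption)."""
    return (size_mb * 1e6) / (params_millions * 1e6)


# Stand-in for real model output: all zeros, with one rectangular region
# scored highest for class 15 ("person" in the assumed ordering).
logits = np.zeros((NUM_CLASSES, INPUT_SIZE, INPUT_SIZE), dtype=np.float32)
logits[15, 100:300, 200:400] = 1.0

mask = decode_logits(logits)
print(mask.shape)
print(classes_present(mask))
print(round(bytes_per_parameter(6.04, 5.80), 2))
```

A ratio of roughly one byte per parameter is what 8-bit weights would give, which fits the "quantized" tag; the exact bit widths used for weights and activations are not stated here.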

Applicable Scenarios

- Anomaly Detection

- Inventory Management

Licenses

Source Model: MIT

Deployable Model: AI-HUB-MODELS-LICENSE

Tags

- quantized

Supported IoT Devices

- QCS6490 (Proxy)

- QCS8250 (Proxy)

- QCS8275 (Proxy)

- QCS8550 (Proxy)

- QCS9075 (Proxy)

- RB3 Gen 2 (Proxy)

- RB5 (Proxy)

Supported IoT Chipsets

- Qualcomm® QCS6490 (Proxy)

- Qualcomm® QCS8250 (Proxy)

- Qualcomm® QCS8275 (Proxy)

- Qualcomm® QCS8550 (Proxy)

- Qualcomm® QCS9075 (Proxy)
