OpenAI-Clip

Multi‑modal foundational model for vision and language tasks such as image/text similarity and zero‑shot image classification.

Contrastive Language‑Image Pre‑Training (CLIP) uses a ViT-like transformer to extract visual features and a causal language model to extract text features. Both sets of features can then be used for a variety of zero‑shot learning tasks.
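To make the zero‑shot idea concrete, here is a minimal sketch of how image and text features might be compared. It is not the model's own code: the 4-dimensional vectors are made-up stand-ins for real encoder outputs, the function names are hypothetical, and the `logit_scale` of 100 is an assumed temperature in the range CLIP-style models typically use.

```python
import math

def l2_normalize(vec):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def zero_shot_classify(image_feature, text_features, logit_scale=100.0):
    """Return one probability per candidate label for a single image."""
    image_unit = l2_normalize(image_feature)
    logits = []
    for text_feature in text_features:
        text_unit = l2_normalize(text_feature)
        cosine = sum(a * b for a, b in zip(image_unit, text_unit))
        logits.append(logit_scale * cosine)  # assumed temperature scaling
    return softmax(logits)

# Hypothetical toy features; a real pipeline would take these from the encoders.
labels = ["a photo of a cat", "a photo of a dog", "a photo of a car"]
text_features = [
    [0.9, 0.1, 0.0, 0.1],
    [0.1, 0.9, 0.1, 0.0],
    [0.0, 0.1, 0.9, 0.2],
]
image_feature = [0.8, 0.2, 0.1, 0.1]

probs = zero_shot_classify(image_feature, text_features)
best_label = labels[probs.index(max(probs))]
print(best_label)  # a photo of a cat
```

No label-specific training is involved: adding a new class only requires encoding one more text prompt.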

Not supported

This model is currently not supported on any IoT chipset.

Technical Details

Model checkpoint: ViT-B/16

Image input resolution: 224x224

Text context length: 77

Number of parameters: 150M

Model size (float): 571 MB
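The figures above can be sanity-checked with a little arithmetic. The sketch below assumes that the "/16" in the checkpoint name is the patch side in pixels, that "float" means 32-bit floats (4 bytes per parameter), and that "MB" is counted in units of 2^20 bytes. None of these assumptions is stated on this page.

```python
# Values copied from the Technical Details list.
image_side = 224          # pixels per side
patch_side = 16           # assumed from the "/16" in ViT-B/16
num_parameters = 150e6    # "150M", a rounded figure

# Assumption: the image is split into non-overlapping square patches.
patches_per_side = image_side // patch_side
num_patches = patches_per_side ** 2

# Assumption: 4 bytes per parameter (32-bit float), size in units of 2**20 bytes.
bytes_per_parameter = 4
size_mib = num_parameters * bytes_per_parameter / 2**20

print(num_patches)      # 196
print(round(size_mib))  # 572
```

Under these assumptions the estimate lands within about 1 MB of the listed 571 MB, a gap that the rounding of the parameter count to 150M could account for.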

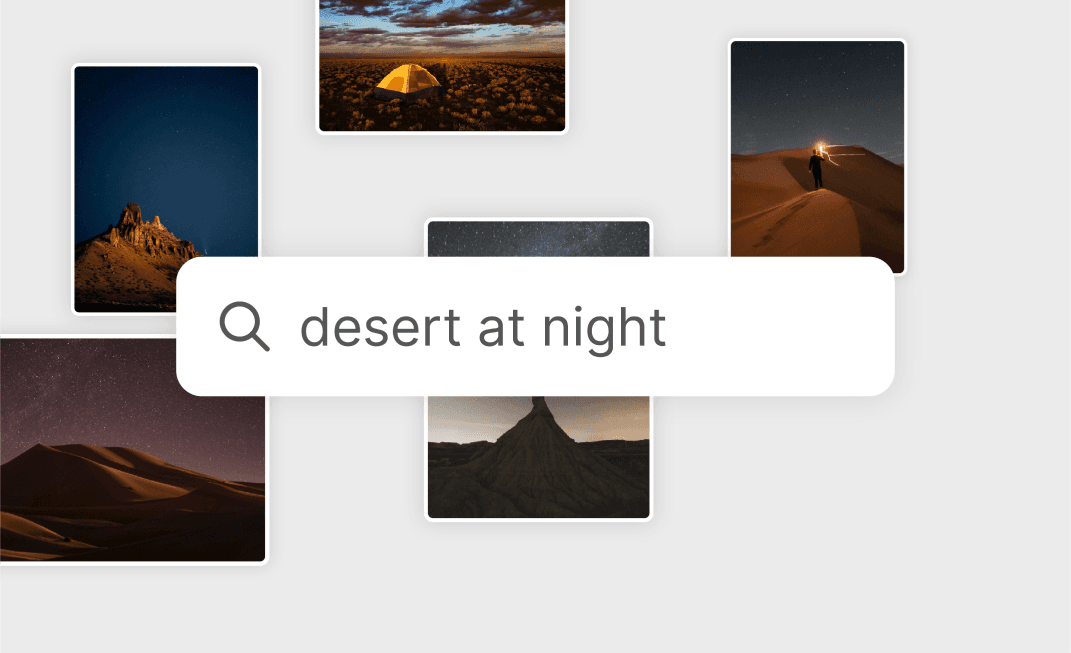

Applicable Scenarios

- Image Search

- Content Moderation

- Caption Creation
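As an illustration of the image search scenario, the following sketch ranks a small gallery by cosine similarity to a text query. The file names, the 3-dimensional feature vectors, and the `search_images` helper are all hypothetical; in practice the features would come from the image and text encoders.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def search_images(query_feature, gallery, top_k=2):
    """Return the names of the top_k gallery images most similar to the query."""
    scored = [
        (cosine_similarity(query_feature, feature), name)
        for name, feature in gallery.items()
    ]
    scored.sort(reverse=True)  # highest similarity first
    return [name for _, name in scored[:top_k]]

# Hypothetical precomputed image features, one per gallery image.
gallery = {
    "beach.jpg": [0.9, 0.1, 0.1],
    "forest.jpg": [0.1, 0.9, 0.2],
    "city.jpg": [0.2, 0.1, 0.9],
}
# Hypothetical text feature for a query such as "a sunny beach".
query_feature = [0.8, 0.3, 0.1]

results = search_images(query_feature, gallery)
print(results)  # ['beach.jpg', 'forest.jpg']
```

Because the gallery features do not depend on the query, they can be computed once and reused for every search.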

License

Model: MIT

Tags

- foundation

Supported IoT Devices

- Dragonwing IQ-9075 EVK

- Dragonwing IQ-X5121

- Dragonwing IQ-X7181

- Dragonwing Q-8750

- QCS8550 (Proxy)

Supported IoT Chipsets

- Qualcomm® QCS8550 (Proxy)

- Qualcomm® QCS9075
